Research

Research Overview: Safe, Agile, and Interactive Robotics

The ultimate goal of our research is to develop provably safe autonomous robotic systems that can adapt to and interact with the world in the way that human beings do, so that they can better serve, assist, and collaborate with people in their daily lives across work, home, and leisure....

Period of Performance: Now ~ Now

Generative Assembly via Bimanual Manipulation

Overview: Assembly is a critical category of tasks in contemporary manufacturing. In particular, we focus on high-mix, low-volume (HMLV) assemblies, which are highly customizable, often involving complex operations, such as Lego assembly. Creating an HMLV assembly product is generally time-consuming due to two key stages: 1) designing the assembly and...

Sponsor: CMU Manufacturing Futures Institute

Period of Performance: 2023 ~ Now

Point of Contact: Ruixuan Liu

Humanoid Robots: Safety and Dexterity

Overview: Humanoid robots have been rapidly advancing in recent years. Their human-like structure enables them to assist with tasks in a manner similar to humans and even learn dexterous skills through human demonstration. However, the high dimensionality of humanoid systems presents significant challenges. At the Intelligent Control Lab, our mission...

Period of Performance: 2023 ~ Now

Hierarchical Temporal Logic Specifications for Robotic Tasks

Overview: Conventional robotic planning, whether task or motion planning, primarily focuses on guiding a robot from an initial state to a target state while avoiding unsafe scenarios. However, with advancements in robotic technology, robots are increasingly expected to perform more complex tasks that go beyond simple point-to-point objectives. For example,...

Period of Performance: 2023 ~ Now

Point of Contact: Xusheng Luo

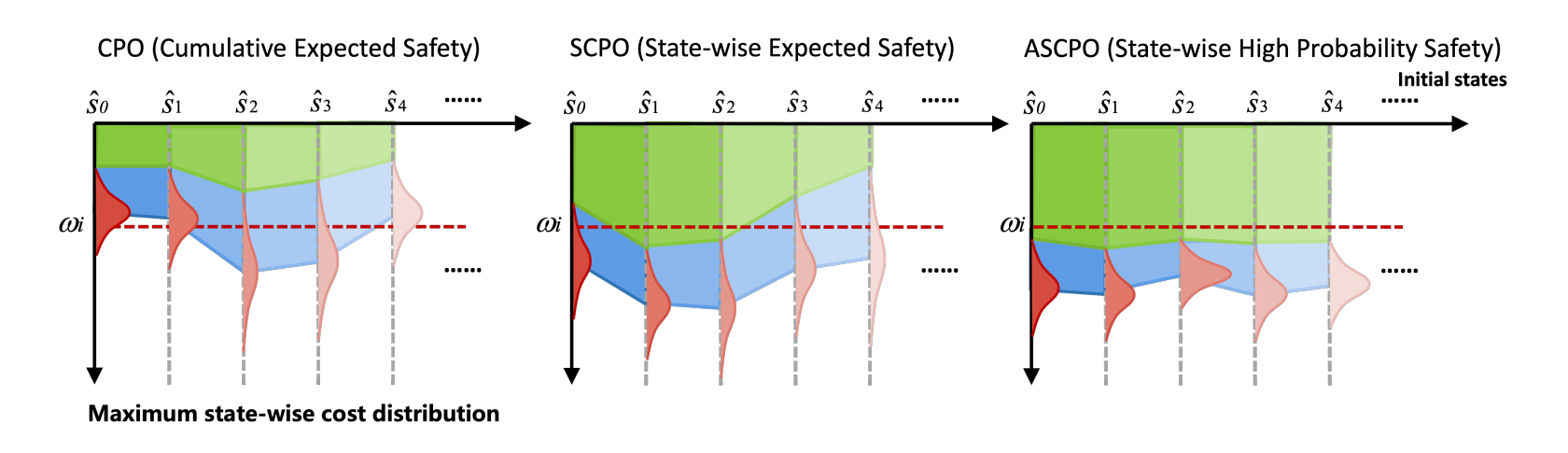

State-wise Safe and Robust Reinforcement Learning for Continuous Control

Overview: Robotic safety has been a focal point of research in both the control and learning communities over the past few decades, addressing a wide range of safety constraints from specific, narrow tasks to more general, broad applications. Achieving provable safety guarantees at every time step (state-wise safety or robustness)...

Period of Performance: 2023 ~ Now

Point of Contact: Weiye Zhao

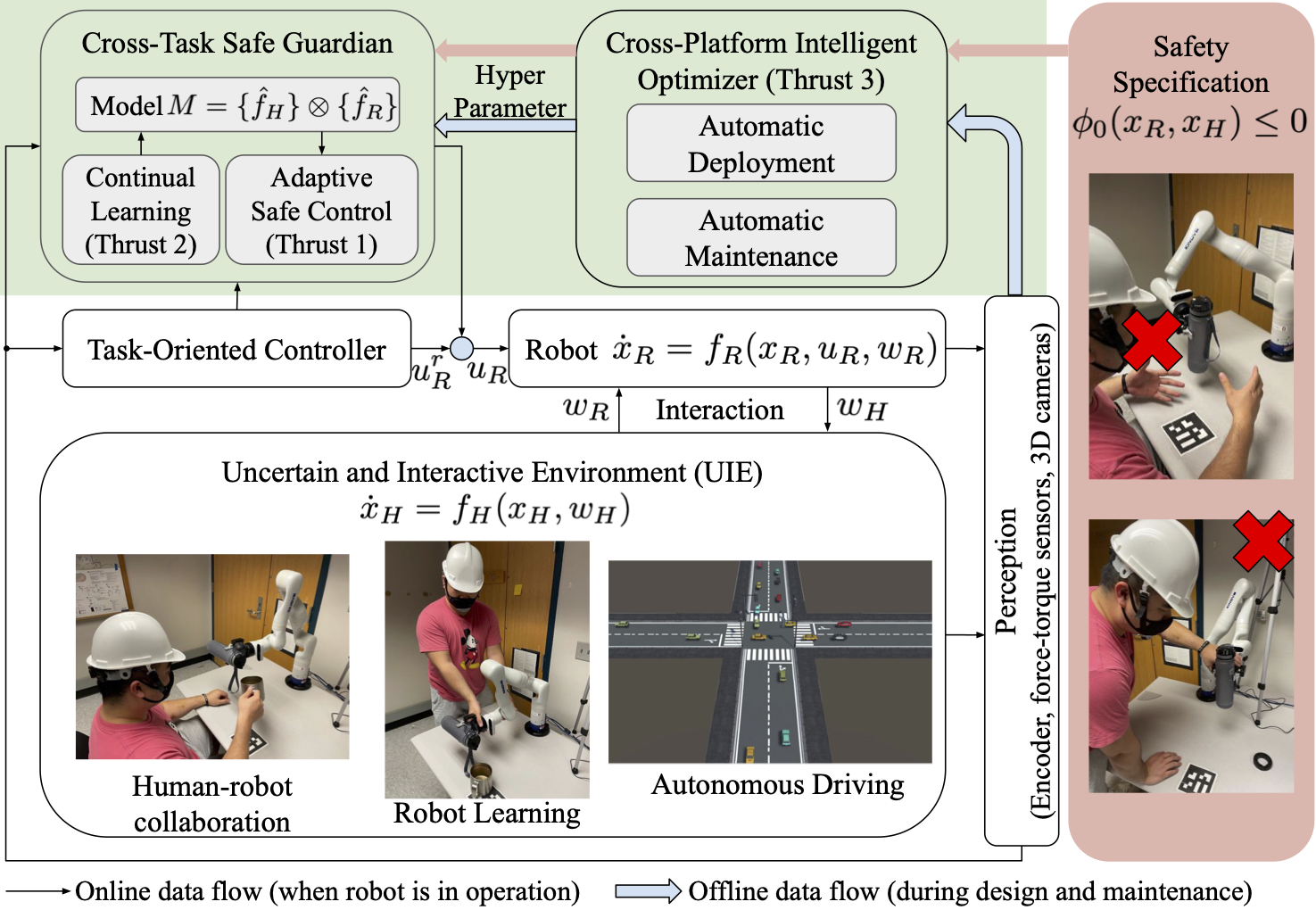

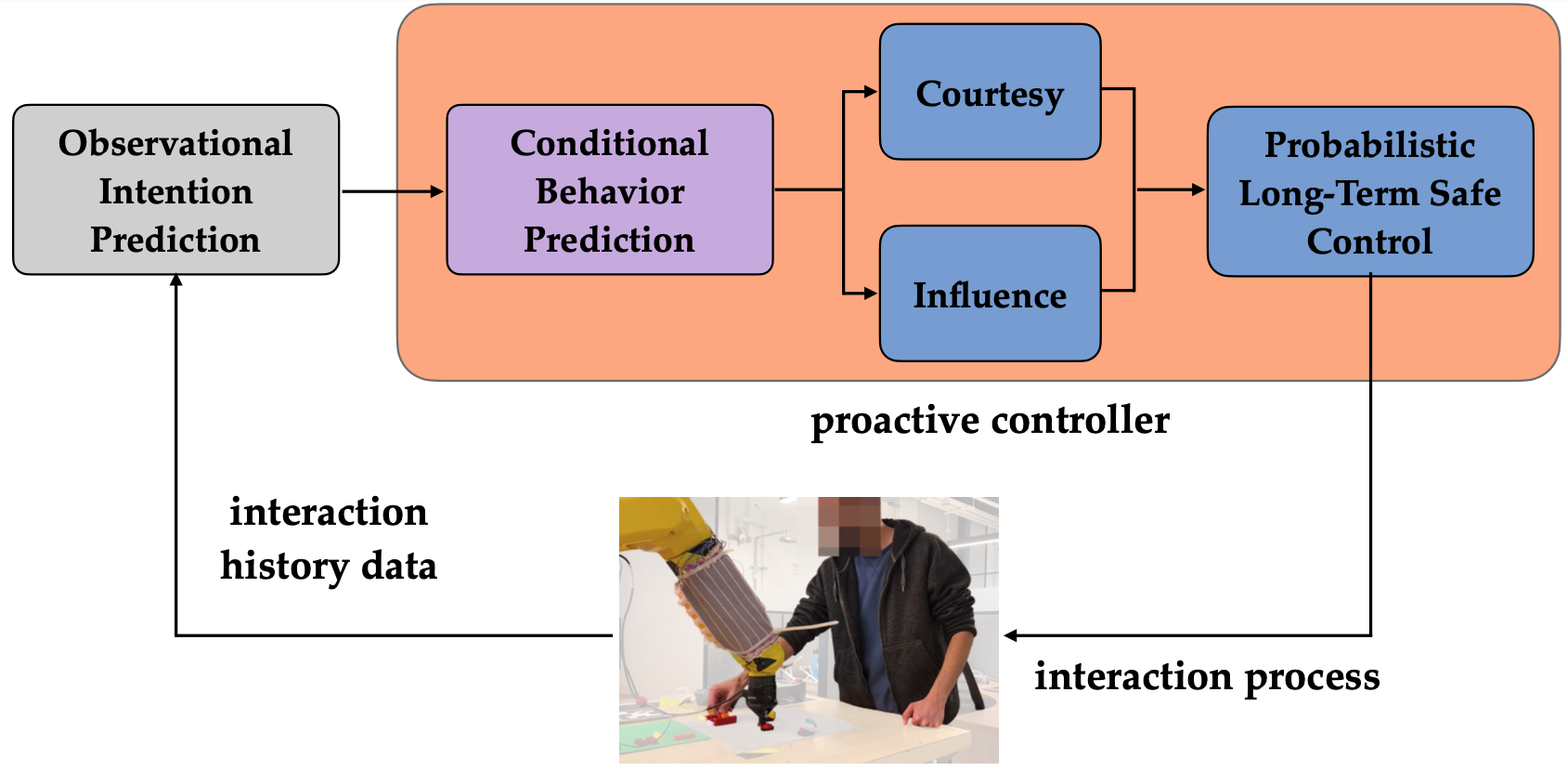

Toward Lifelong Safety of Autonomous Systems in UIE

Overview: The research objective of this proposal is to study the design principles to achieve optimal lifelong safety of autonomous robotic systems in uncertain and interactive environments (UIEs). For example, this capability will enable industrial collaborative robots to safely and optimally work with unfamiliar human workers in novel tasks throughout...

Sponsor: National Science Foundation

Period of Performance: 2022 ~ Now

Real-Time Systems for Human-Robot Interaction and Industrial Metaverse

Overview: Many industrial tasks nowadays require machines to be flexible, i.e., they should be able to 1) understand changing environments and tasks and 2) generate corresponding actions in real time. For example, in machine tending, the manipulators should generate actions based on the real-time configuration of the materials, which needs to...

Sponsor: Siemens

Period of Performance: 2021 ~ Now

Point of Contact: Yifan Sun

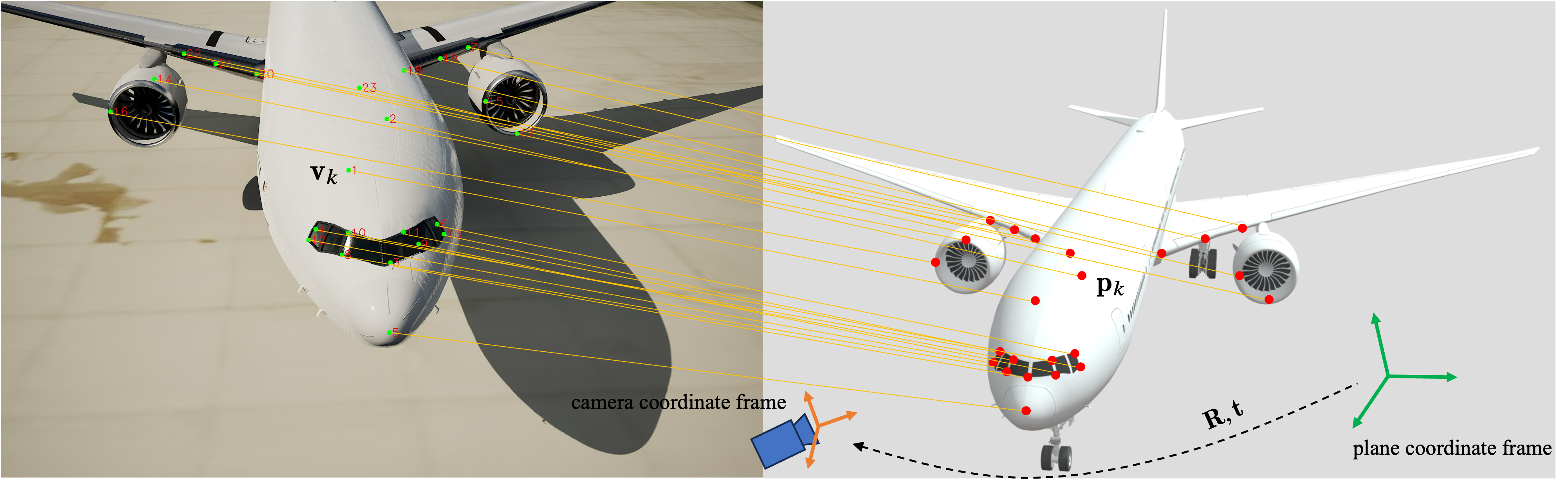

Enhancing and Verifying the Robustness of Learning-based Systems

Overview: Deep Neural Networks (DNNs) play a crucial role in many autonomous systems, particularly in safety-critical applications like autonomous driving. Consequently, it is essential for these learning-based systems to be reliable when making decisions or supporting human decision-making. In this project, we focus on robustness as a key property—ensuring that...

Sponsor: The Boeing Company

Period of Performance: 2023 ~ 2024

Point of Contact: Xusheng Luo, Tianhao Wei

Automatic Onsite Grinding of Large Complex Surfaces

Polishing and grinding of metallic parts is an important manufacturing operation in many industrial applications. It remains challenging to polish large complex surfaces. Polishing is predominantly done manually, which is very time-consuming, expensive, and, more importantly, can be a safety hazard for human operators using hand-held devices. We propose...

Sponsor: Advanced Robotics for Manufacturing Institute

Period of Performance: 2019 ~ 2024

Point of Contact: Weiye Zhao

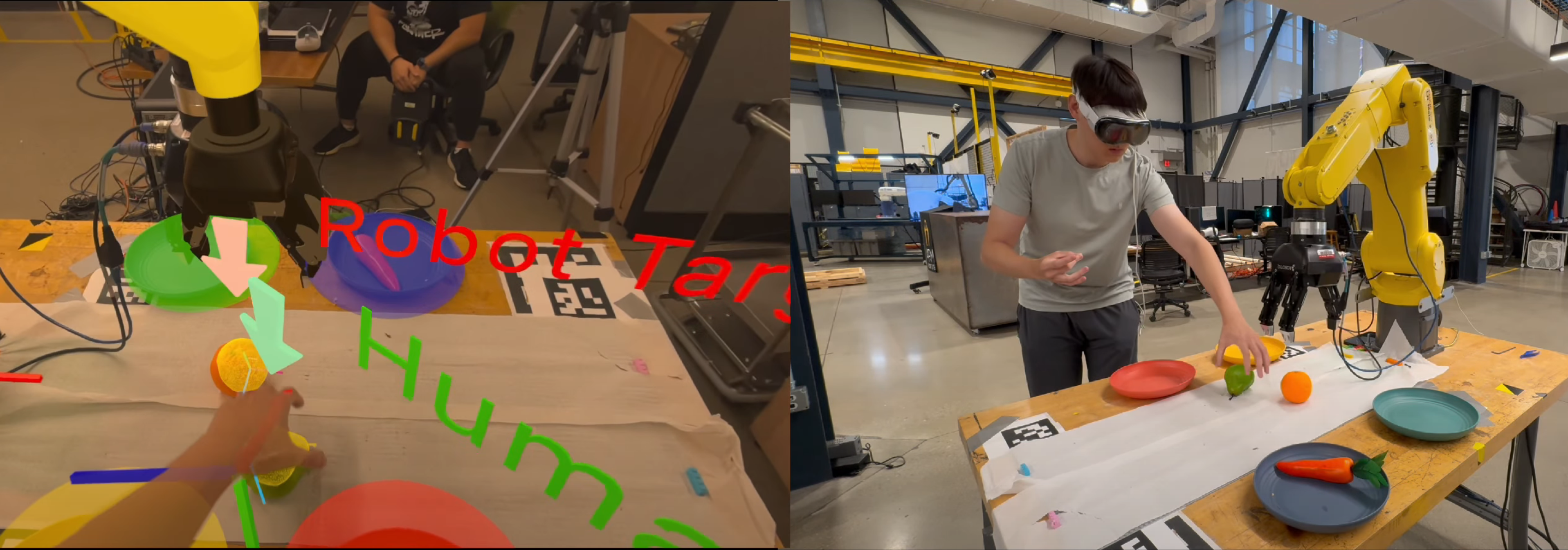

Proactive Safe Human-Robot Interaction

Overview: As robots become more common in industrial manufacturing, social, and home environments, it is imperative that they seamlessly and safely collaborate with humans. This would allow us to take advantage of the speed and precision of robots as well as the flexibility of humans for completing tasks. In this...

Sponsor: CMU Manufacturing Futures Institute

Period of Performance: 2022 ~ 2023

Point of Contact: Ravi Pandya

Safe Uncaged Industrial Robots

Safe operation of intelligent robots in interactive environments depends on accurate prediction of others and consequent safe control of the ego robot. However, it remains challenging to 1) generate high-fidelity predictions of humans; 2) soundly verify the uncertainty associated with the prediction; and 3) incorporate the prediction and the verified...

Sponsor: Ford Motor Company

Period of Performance: 2020 ~ 2022

Point of Contact: Ruixuan Liu

Hierarchical Motion Planning for Efficient and Provably Safe HRI

Safe and efficient robot motion planning is critical to ensure desired human-robot interactions. However, there are very few methods that can comprehensively address uncertainties in human behaviors, robot model mismatch, robot computation limits, and measurement and actuation noise in an integrated planning theme. This proposal aims to develop a planning...

Sponsor: Amazon Research Award

Period of Performance: 2020 ~ 2021

Point of Contact: Weiye Zhao

6DoF Robot Assembly Station of Consumer Electronic Production

Assembly of consumer electronics in manufacturing is a time-consuming and labor-intensive task. Assembly is one of the most important robot applications in computer, communication, and consumer electronics (3C) production. Programming a traditional industrial robotic manufacturing system requires a significant amount of time and resources (and therefore investment). This makes it difficult...

Sponsor: Efort

Period of Performance: 2019 ~ 2021

Point of Contact: Rui Chen

Adaptable Behavior Prediction for Autonomous Driving

In highly interactive driving scenarios, accurate prediction of other road participants is critical for safe and efficient navigation of autonomous cars. Prediction is challenging due to the difficulty of modeling diverse driving behaviors or learning such a model. The model should be interactive and reflect individual differences. Imitation learning methods...

Sponsor: Holomatic

Period of Performance: 2019 ~ 2019

Point of Contact: Tianhao Wei

Past Projects

Projects List

2018~2018 Verification of Deep Neural Networks and Systems with NN Components

2018~2018 Micro to Macro Traffic Management and Modeling with Autonomous Vehicles

2017~2021 Safe and Efficient Robot Collaboration System (SERoCS)

2014~2017 Robustly-Safe Automated Driving (ROAD) Systems

2013~2017 Robot Safe Interaction Systems (RSIS) for Intelligent Industrial Co-Robots

Verification of...

Period of Performance: 2012 ~ 2018